Welcome to geeViz

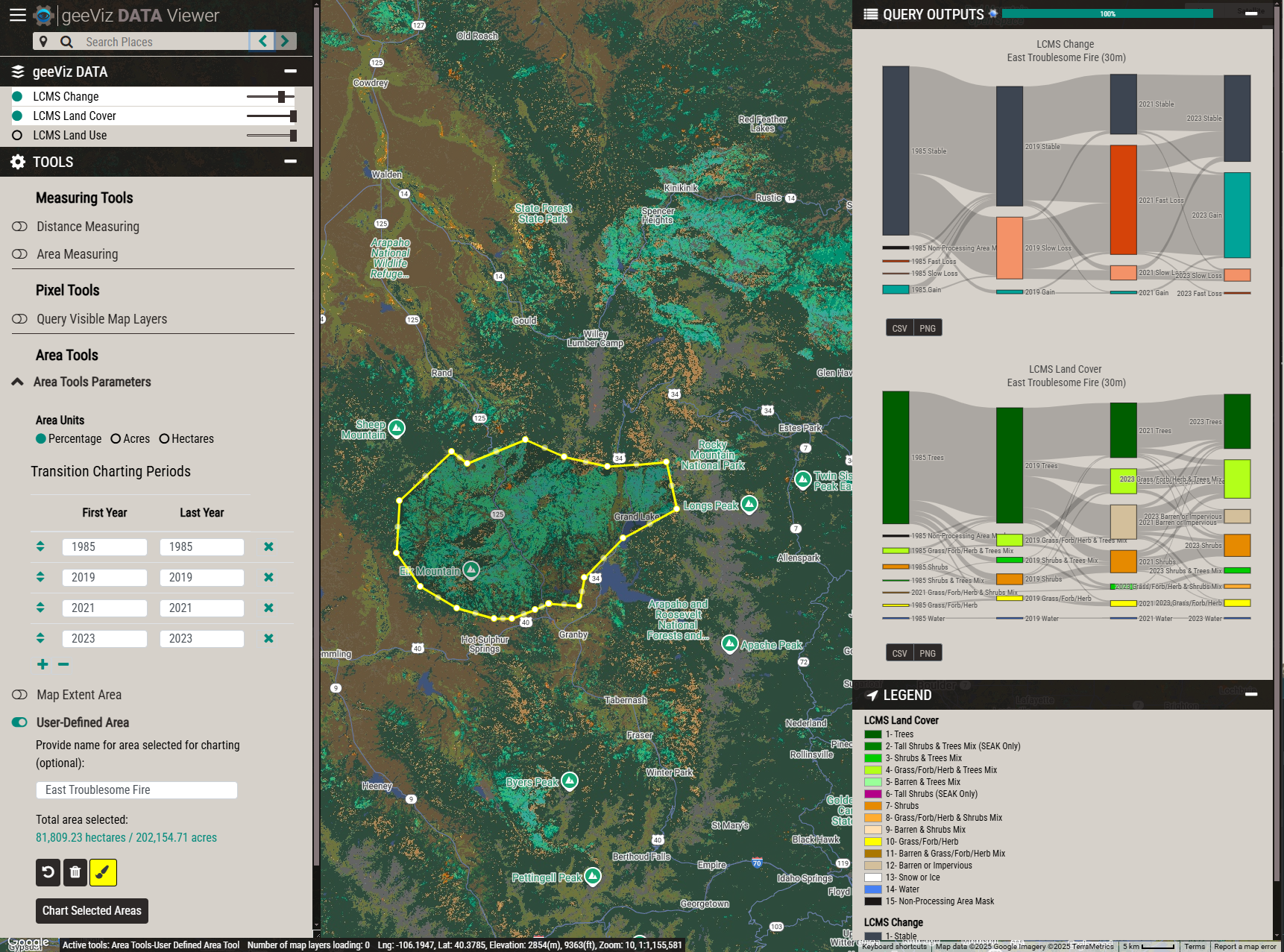

geeViz is a powerful, open-source Python toolkit for working with geospatial data in Google Earth Engine (GEE). Developed by RedCastle Resources, geeViz brings interactive mapping, visualization, and streamlined analysis to Python users, making it easy to turn Earth observation data into insight.

What is geeViz?

geeViz is designed to fill the gap between GEE’s JavaScript Code Editor and Python workflows, offering a feature-rich map viewer and a robust set of helper libraries that simplify every stage of geospatial analysis in Python:

Explore: Browse Earth Engine Image, ImageCollection, Feature, and FeatureCollection objects in an interactive web map with toggling, querying, charting, and visualization tools.

Analyze: Easily run zonal stats, time series extractions, segmentation algorithms, and batch analyses from scripts or notebooks.

Visualize: Instantly create time-lapse animations, custom area charts, and interactive data overlays—all without switching to JavaScript.

geeViz supports workflows in standard Python scripts, Jupyter/IPython notebooks, Google Colab, and Vertex AI Workbench.

Features at a Glance

Interactive map viewer for Earth Engine datasets, with pixel querying and area-based statistics.

Intuitive visualization functions for customizing map layers, opacities, RGB composites, palettes, and overlays.

Built-in charting tools for time series, area summaries, and temporal segmentation, including geeViz.outputLib.charts for inline Plotly charts (time series, bar, Sankey, donut, scatter) with auto-detected thematic/continuous data and area format conversion.

Utilities for importing and exporting data, including seamless handling of Google Cloud Storage, Drive, no-data values, band resampling, and more.

Scriptable: Automate GEE workflows—geeViz helps you avoid common API pitfalls around filtering, masking, date logic, and data export.

Modular library: Includes specialized modules for imagery processing, change detection, cloud storage, asset management, and more.

AI-ready via MCP: A built-in Model Context Protocol server lets AI coding assistants (Cursor, Claude Code, VS Code Copilot, Windsurf) execute geeViz code, inspect live assets, and look up real function signatures instead of guessing.

Background & Ecosystem

Since 2012, RedCastle Resources has been using Google Earth Engine for national- and continental-scale monitoring and change mapping. Originally built to support the Landscape Change Monitoring System (LCMS) Viewer framework for the US Forest Service, geeViz is now a fully open-source Python package that encapsulates the core visualization and processing tools developed for LCMS, plus robust helper libraries for Landsat, Sentinel-2, MODIS, and more.

geeViz is actively maintained and widely adopted for use in:

Joint training programs developed by RedCastle and Google (see LCMS training)

Academic research and enterprise GEE/Python workflows

Tip

geeViz helps you:

Visualize complex GEE datasets interactively, without needing to code in JavaScript.

Avoid common GEE traps: Handles null/no-data values, preserves QA bands, standardizes date filtering, and makes multi-year composites easy.

Accelerate scripting and reproducibility: Write repeatable Python code for geospatial mapping, batch exports, and reporting.

AI-Assisted Development with MCP

geeViz includes a built-in MCP (Model Context Protocol) server with 21 tools that give AI coding assistants live access to geeViz and Google Earth Engine. Instead of generating code from training data (which is often wrong or outdated), your AI assistant can look up real function signatures, read actual example scripts, execute and test code, inspect assets, export data, manage tasks, and more — all grounded in the real codebase.

The MCP server works with Cursor, Claude Code, VS Code with GitHub Copilot, Windsurf, and any other MCP-compatible client. Setup takes about two minutes: install geeViz (the mcp SDK is included as a dependency) and add a short config file for your editor. See the MCP Server guide for full details.
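As a rough illustration of the "short config file" step, the sketch below writes one server entry into a JSON config using the mcpServers layout that several MCP clients (for example Cursor's .cursor/mcp.json) read. The helper name write_mcp_config is hypothetical and not part of geeViz, and the exact file location and launch command for your editor should be taken from the MCP Server guide.

```python
import json
from pathlib import Path


def write_mcp_config(config_path, server_name, command, args):
    """Add or update one server entry in an MCP client config file.

    Hypothetical helper for illustration only; it is not part of geeViz.
    """
    path = Path(config_path)
    # Keep any servers that are already configured in the file.
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    servers = config.setdefault("mcpServers", {})
    servers[server_name] = {"command": command, "args": list(args)}
    # Create the parent folder (e.g. .cursor/) if it does not exist yet.
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

A call might look like write_mcp_config(".cursor/mcp.json", "geeviz", "python", ["-m", "geeViz.mcp.server"]). The module path geeViz.mcp.server is an assumption here; confirm the actual launch command in the MCP Server guide before relying on it.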

geeViz also ships a ready-to-use agent instructions file at geeViz/mcp/agent-instructions.md that tells the AI when and how to use the MCP tools. Copy its contents into your editor’s instructions file (.github/copilot-instructions.md, .cursorrules, CLAUDE.md, or .windsurfrules) to get the best results.
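The copy step can also be scripted. The following is a minimal sketch using only the standard library; install_agent_instructions is a hypothetical helper, not a geeViz function. It appends the instructions to the target file and skips the write if they are already present.

```python
from pathlib import Path


def install_agent_instructions(source, target):
    """Append agent instructions to an editor instructions file, once.

    Hypothetical helper for illustration only; it is not part of geeViz.
    Returns True if the target was written, False if nothing changed.
    """
    source, target = Path(source), Path(target)
    instructions = source.read_text(encoding="utf-8").strip()
    existing = target.read_text(encoding="utf-8") if target.exists() else ""
    if instructions in existing:
        return False  # already installed, leave the file untouched
    # Create folders such as .github/ when they are missing.
    target.parent.mkdir(parents=True, exist_ok=True)
    # Separate the new text from existing rules with one blank line.
    separator = "\n\n" if existing.strip() else ""
    target.write_text(
        existing.rstrip() + separator + instructions + "\n", encoding="utf-8"
    )
    return True
```

Here source would be the path to geeViz/mcp/agent-instructions.md in your geeViz installation, and target one of the instructions files listed above, such as CLAUDE.md or .github/copilot-instructions.md.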

Getting Started

Check out the Installation section for how to install geeViz and set up Earth Engine.

Visit the Examples section for ready-to-run scripts and Jupyter notebooks covering beginner to advanced use cases.

The geeViz.geeView.mapper class powers geeViz’s map viewer, based on the same code as the LCMS Viewer platform.

Set up the MCP Server to let AI coding assistants (Cursor, Claude Code, etc.) execute geeViz code and inspect live Earth Engine assets.
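To give a feel for the map viewer, here is a minimal sketch of adding one layer through the geeViz.geeView.mapper class. It assumes geeViz is installed, Earth Engine is authenticated, and that geeViz.geeView exposes a ready-made mapper instance named Map with addLayer, turnOnInspector, and view methods; check the Examples section for the authoritative usage. The function names, the SRTM dataset choice, and the palette are illustrative only.

```python
def elevation_viz(min_m=0, max_m=3000):
    """Build visualization parameters for an elevation layer (illustrative values)."""
    return {
        "min": min_m,
        "max": max_m,
        "palette": ["006633", "E5FFCC", "662A00", "D8D8D8", "F5F5F5"],
    }


def view_elevation():
    """Add an SRTM elevation layer to the geeViz map viewer and open it."""
    # Imported lazily: both packages are needed only when the viewer is
    # actually launched, and an authenticated Earth Engine session is required.
    import ee
    import geeViz.geeView as gv

    Map = gv.Map  # assumed: module-level instance of geeViz.geeView.mapper
    dem = ee.Image("USGS/SRTMGL1_003")  # public SRTM 30 m elevation dataset
    Map.addLayer(dem, elevation_viz(), "SRTM elevation")
    Map.turnOnInspector()  # assumed: enables pixel querying on click
    Map.view()  # opens the interactive viewer
```

Calling view_elevation() from a script or notebook should open the viewer with the layer loaded. The visualization parameters are kept in a separate function so they can be adjusted or reused without touching the Earth Engine calls.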